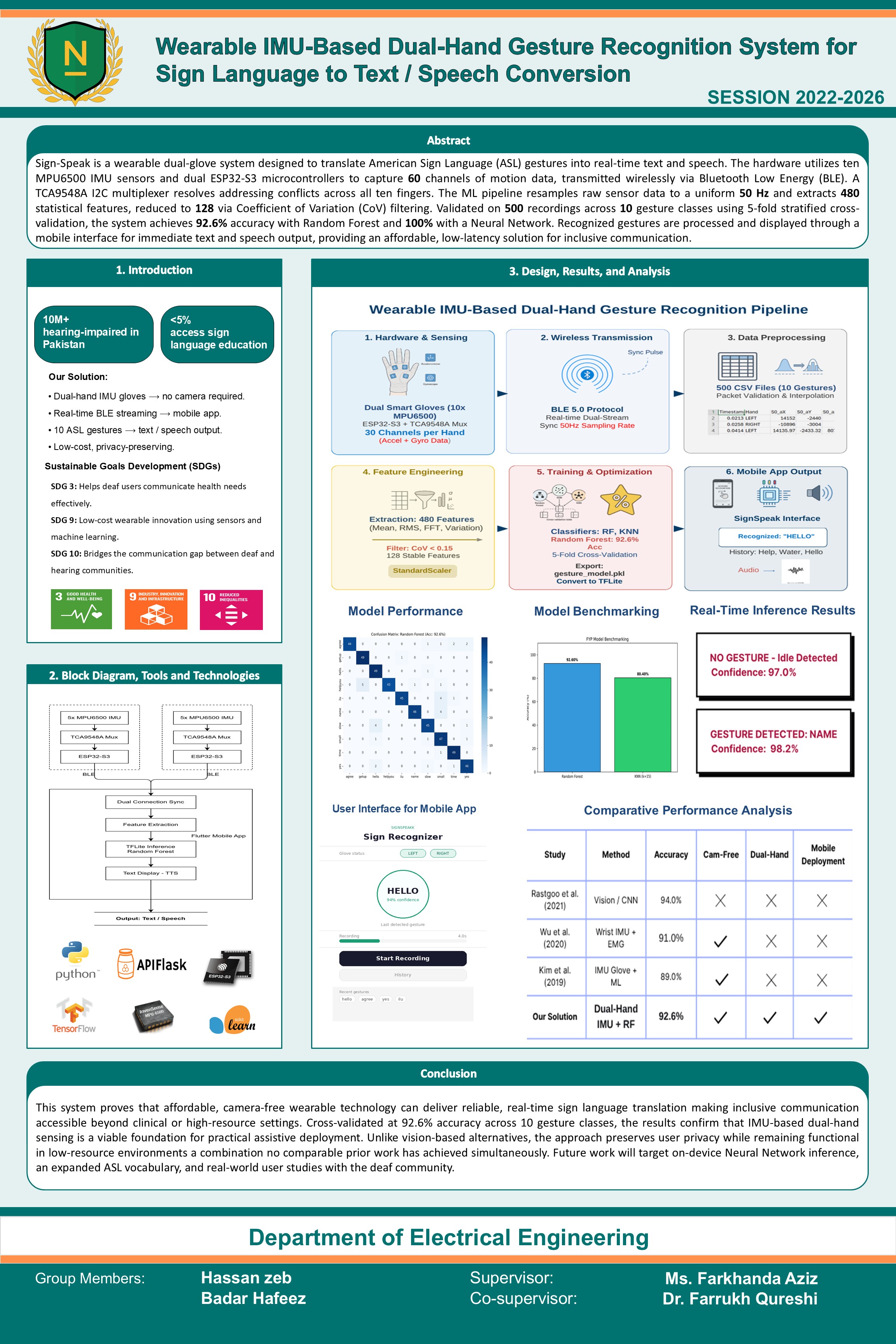

Effective communication between the deaf and hearing communities remains a persistent challenge because few people in the general population know sign language. This project presents a wearable, IMU-based dual-hand gesture recognition system that translates American Sign Language (ASL) gestures into real-time text output. The system uses two instrumented gloves, each housing five MPU6500 IMU sensors managed through a TCA9548A I2C multiplexer and driven by an ESP32-S3 microcontroller. Together the gloves capture 60 channels of motion data across all ten fingers. Bluetooth Low Energy carries the data wirelessly to an Android mobile application, which streams it to a Flask API server. There, a feature engineering pipeline extracts 480 statistical features, which Coefficient of Variation filtering reduces to 128 stable features. A Random Forest classifier then classifies the gesture and returns the prediction to the app for immediate display. On a custom dataset of 500 recordings spanning 10 ASL gesture classes, the classifier achieves 92.6% accuracy under 5-fold stratified cross-validation. The system offers a camera-free, privacy-preserving, and low-cost assistive solution suitable for low-resource environments. Future work targets an expanded vocabulary, on-device inference, and real-world evaluation with the deaf community.
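The server-side pipeline described above can be sketched roughly as follows. This is a minimal illustration, not the project's actual code. The eight statistics per channel are an assumption, chosen only because 60 channels × 8 statistics gives the 480 features mentioned. The Coefficient of Variation rule (keep the 128 features with the lowest mean within-class CoV) is one plausible reading of the filtering step. The function names, window length, and synthetic data are all hypothetical, so the sketch does not reproduce the reported 92.6% accuracy.

```python
import numpy as np
from scipy import stats
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold

N_CHANNELS = 60  # 10 IMUs x 6 axes, as stated in the abstract
N_KEEP = 128     # number of stable features, as stated in the abstract


def extract_features(window):
    """Map a (n_samples, 60) recording to a 480-element feature vector.

    The eight statistics below are an assumed choice, not the project's list.
    """
    feats = [
        window.mean(axis=0),
        window.std(axis=0),
        window.min(axis=0),
        window.max(axis=0),
        np.median(window, axis=0),
        window.max(axis=0) - window.min(axis=0),  # range
        np.sqrt((window ** 2).mean(axis=0)),      # RMS
        stats.skew(window, axis=0),
    ]
    return np.concatenate(feats)


def select_stable(X, y, n_keep=N_KEEP, eps=1e-8):
    """Return indices of the n_keep features with the lowest mean within-class CoV.

    Assumed rule: CoV = std / |mean| over recordings of one class, averaged over classes.
    """
    cvs = []
    for c in np.unique(y):
        Xc = X[y == c]
        cvs.append(Xc.std(axis=0) / (np.abs(Xc.mean(axis=0)) + eps))
    return np.argsort(np.mean(cvs, axis=0))[:n_keep]


def make_synthetic(n_classes=10, per_class=50, n_samples=100, seed=0):
    """Generate placeholder data: 500 recordings, each class with its own channel offsets."""
    rng = np.random.default_rng(seed)
    X, y = [], []
    for c in range(n_classes):
        offset = rng.normal(0.0, 1.0, N_CHANNELS)
        for _ in range(per_class):
            window = offset + rng.normal(0.0, 0.5, (n_samples, N_CHANNELS))
            X.append(extract_features(window))
            y.append(c)
    return np.array(X), np.array(y)


def cross_validate(X, y, n_splits=5, seed=0):
    """Run stratified k-fold CV, selecting features on each training fold only."""
    skf = StratifiedKFold(n_splits=n_splits, shuffle=True, random_state=seed)
    scores = []
    for train, test in skf.split(X, y):
        keep = select_stable(X[train], y[train])
        clf = RandomForestClassifier(n_estimators=100, random_state=seed)
        clf.fit(X[train][:, keep], y[train])
        scores.append(clf.score(X[test][:, keep], y[test]))
    return float(np.mean(scores))
```

Calling `X, y = make_synthetic()` followed by `cross_validate(X, y)` returns the mean fold accuracy on the placeholder data. Selecting features inside each fold, rather than once on the full dataset, keeps the held-out recordings from influencing which features are kept.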

Tools: MPU6500 IMU, ESP32-S3, TCA9548A I2C Multiplexer, BLE 5.0, Python 3.10, Scikit-learn, NumPy, SciPy

Department: Department of Electrical Engineering

Poster